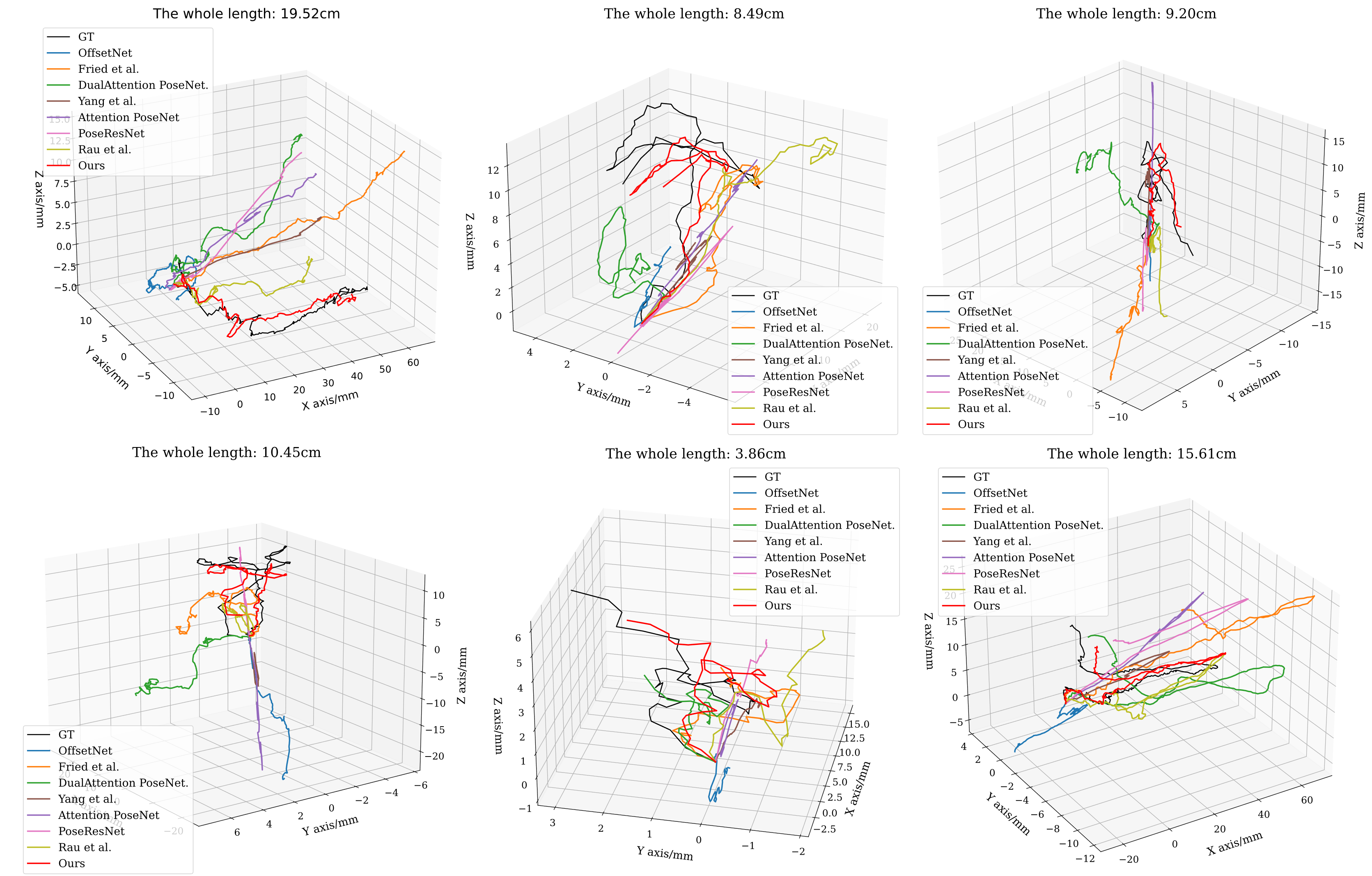

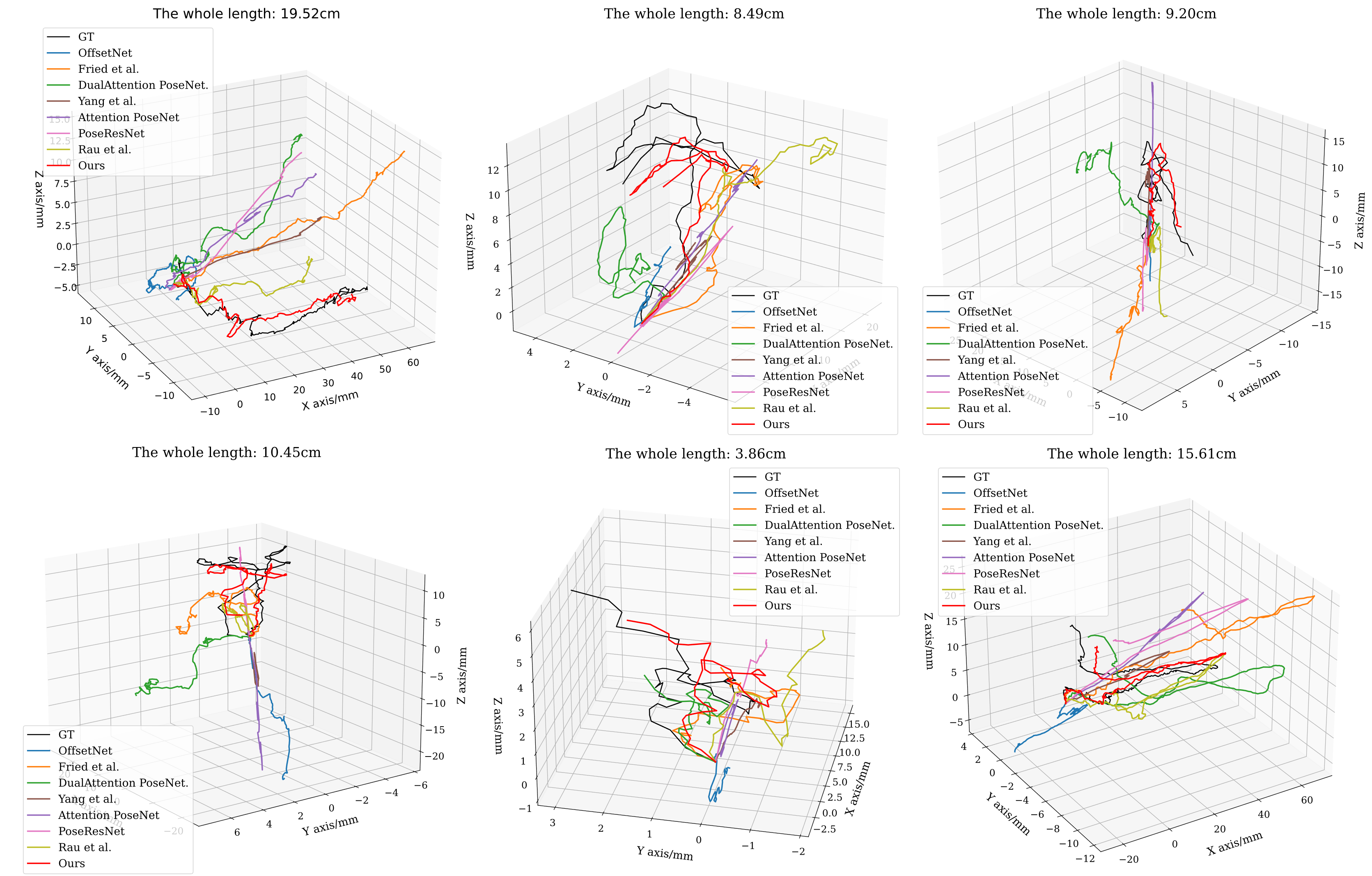

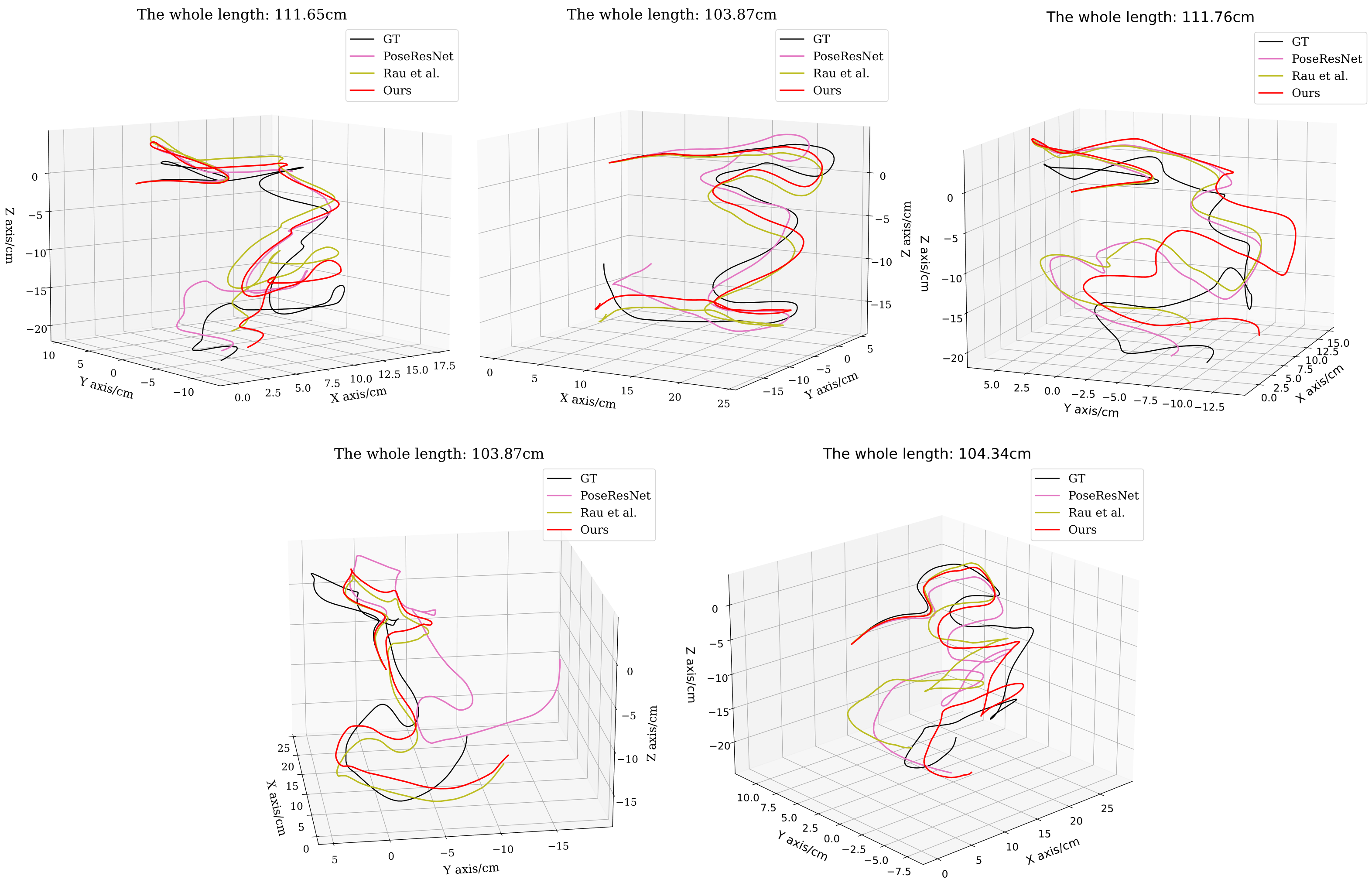

Trajectories generated by ego-motion tracking on NEPose

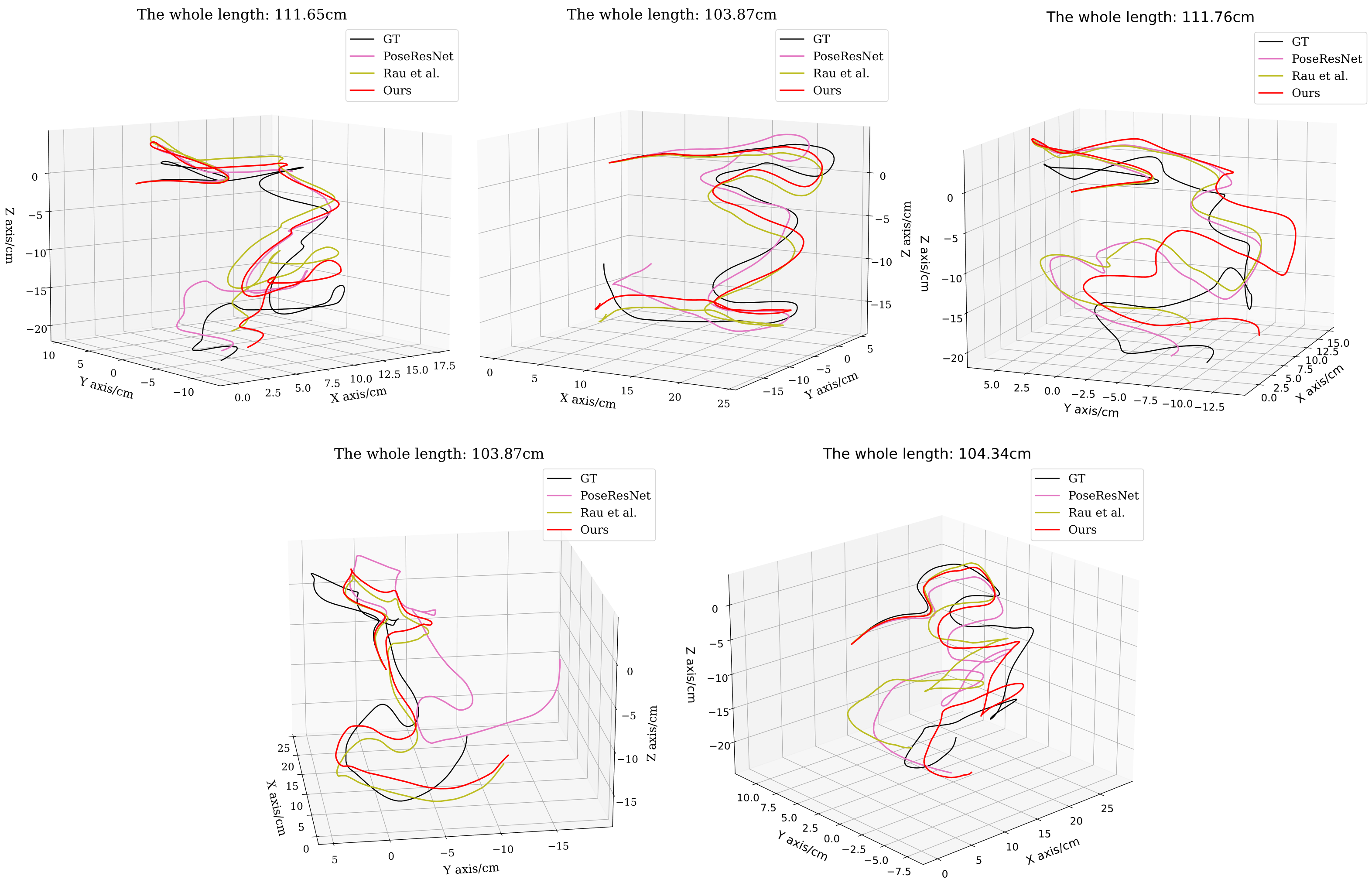

Trajectories generated by ego-motion tracking on SimCol

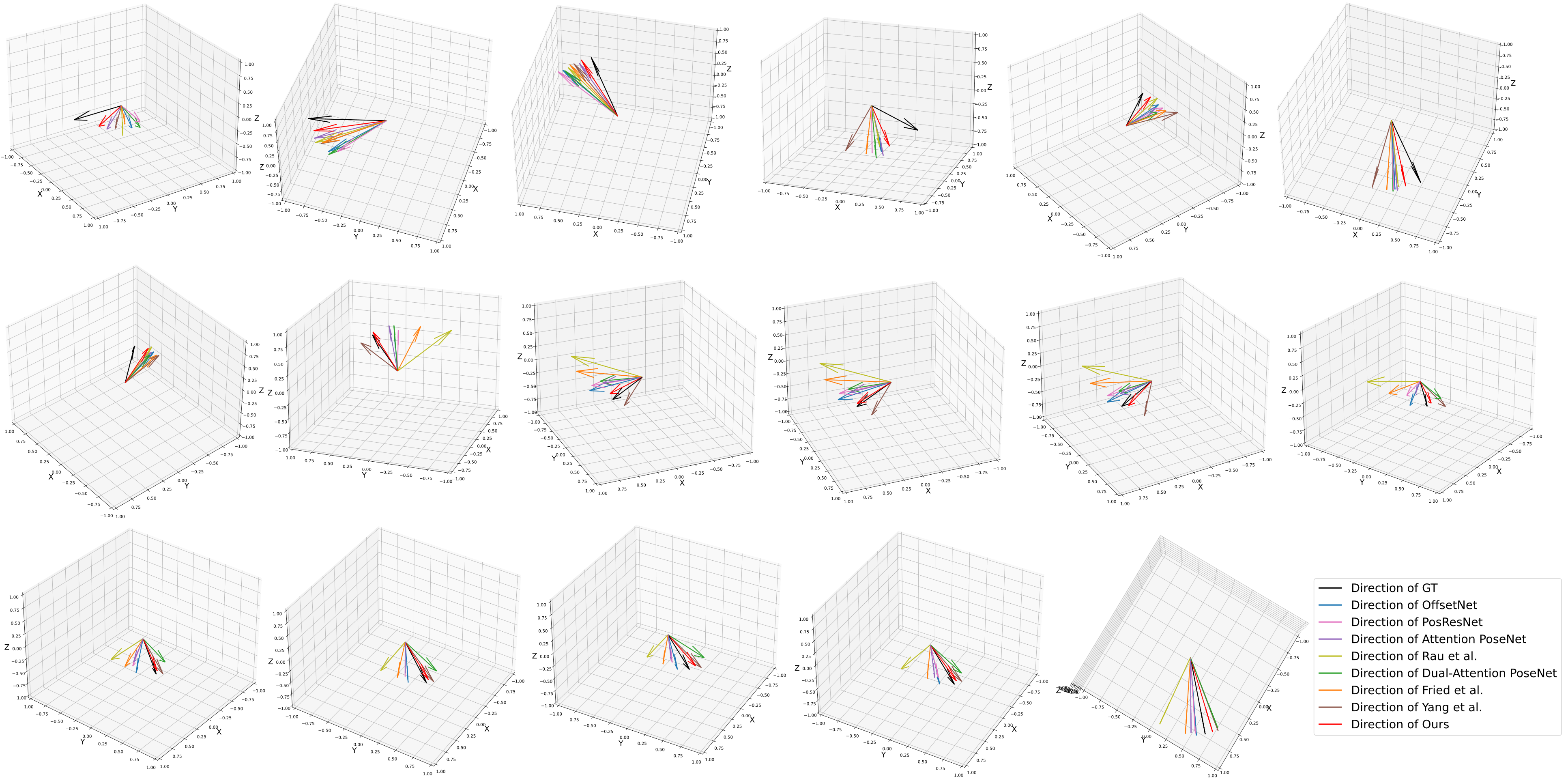

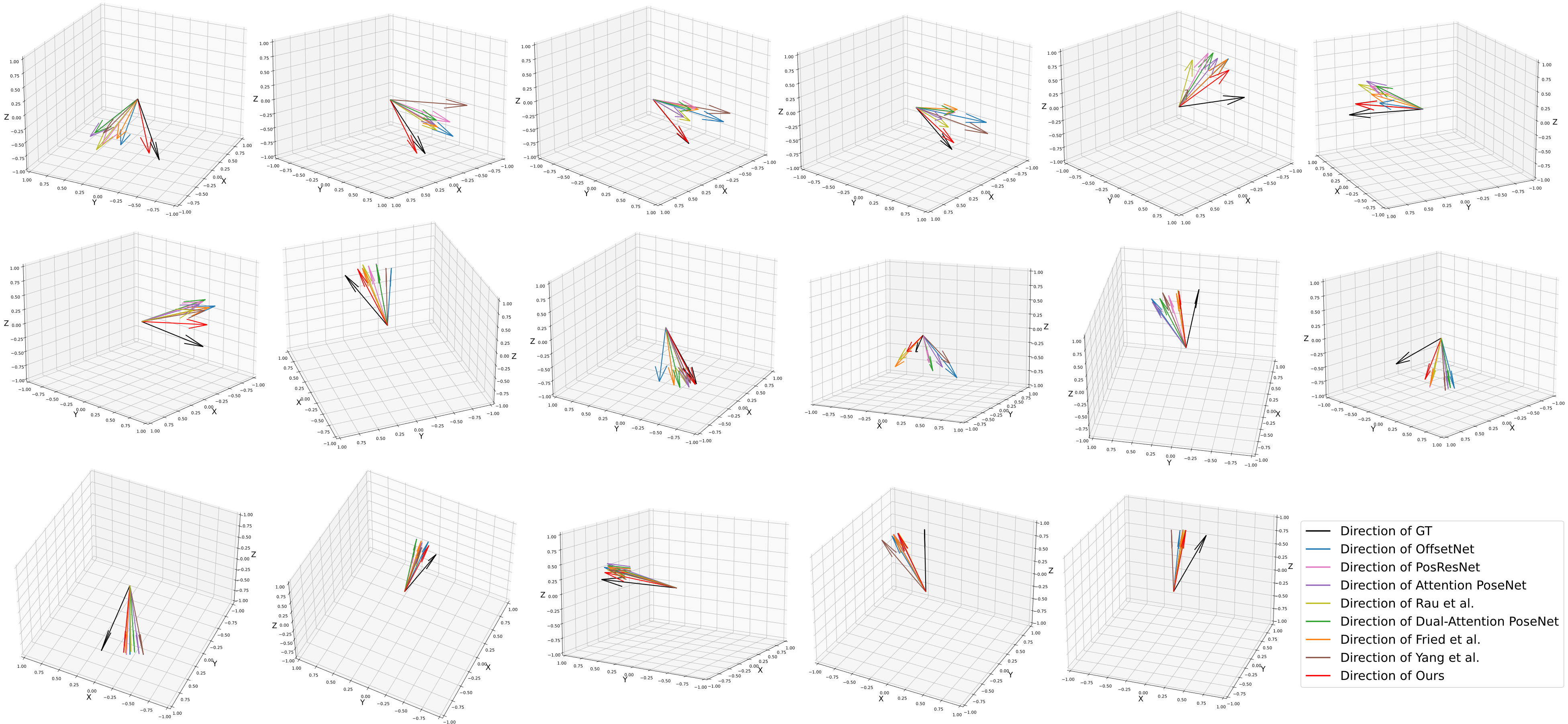

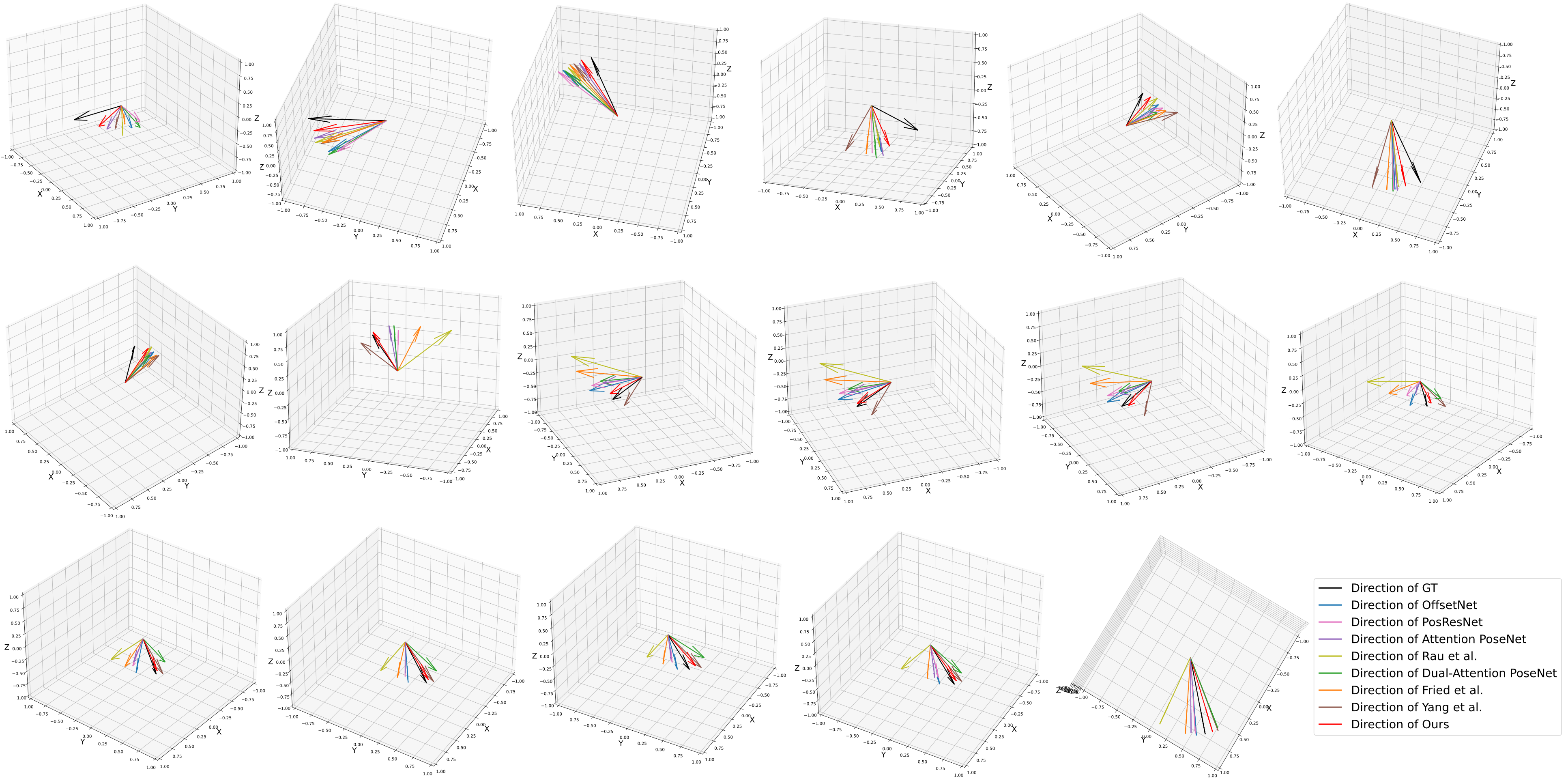

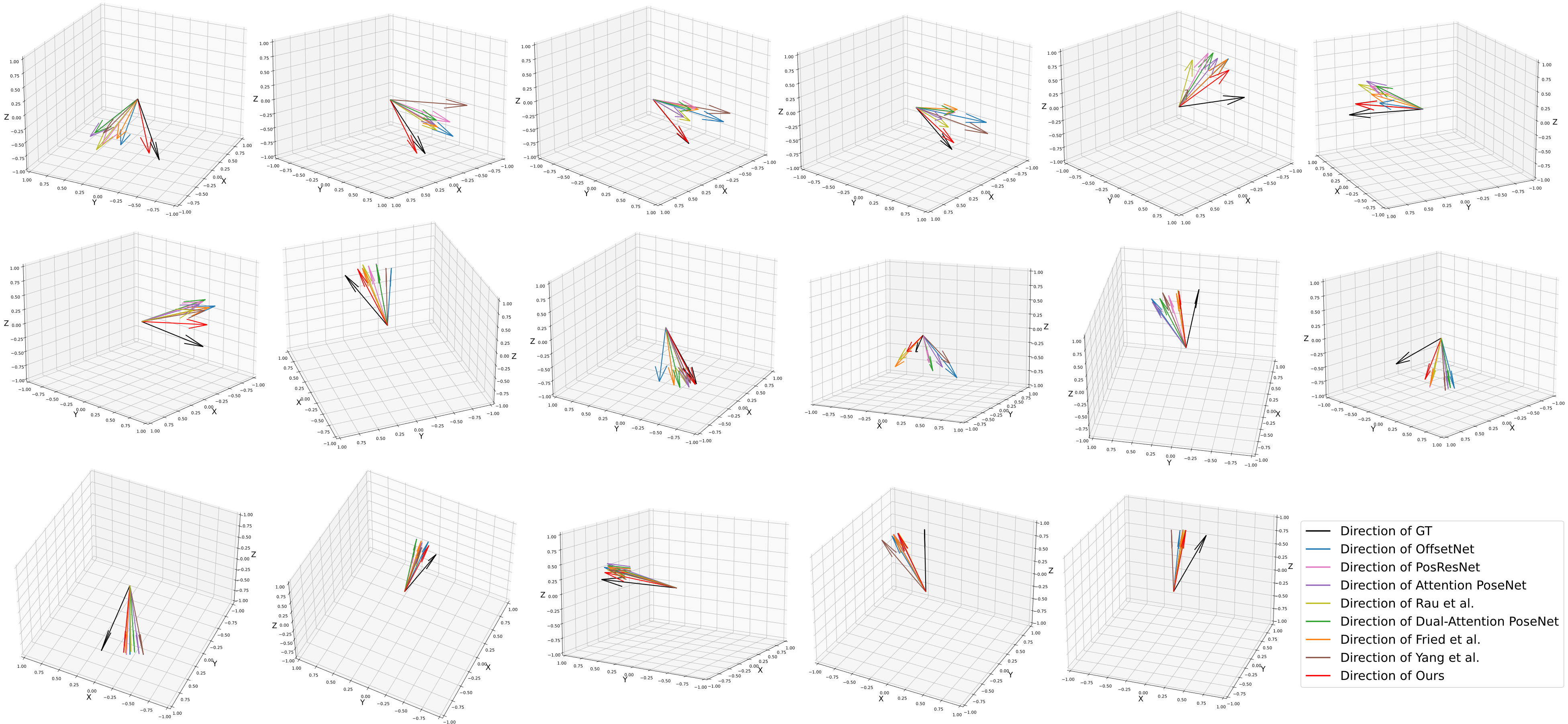

Directions predicted by ego-motion tracking on SimCol

Real-time ego-motion tracking of the endoscope is a key task for efficient navigation and robotic automation of endoscopy. In this paper, a novel framework is proposed to perform real-time ego-motion tracking for endoscopes. First, a multi-modal visual feature learning network is proposed to predict the relative pose. In particular, the scene feature is extracted from the current observation and the previous frame. Simultaneously, the motion feature is extracted from the optical flow, which is estimated by a pretrained high-speed optical-flow network. Moreover, an attention-based feature extractor is designed to extract multi-dimensional information from the concatenation of two consecutive frames. At the end of the network, a pose decoder predicts the pose transformation from the concatenated feature map. Finally, the absolute pose of the endoscope is calculated from the accumulated relative poses. In the experiments, the proposed method outperforms state-of-the-art methods on three datasets covering different types of endoscopes. In addition, the inference speed of the proposed method exceeds 30 frames per second on all three datasets, which meets the real-time requirement.
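The abstract fuses scene features, optical-flow motion features, and an attention-based extractor over the concatenated frames, but it does not specify which attention design is used. The sketch below illustrates the general idea with a plain squeeze-and-excitation style channel attention over channel-wise concatenated feature maps. This is a swapped-in generic technique, not the authors' extractor; the names `concat_channels` and `channel_attention`, the `[channel][position]` layout, and the weight shapes are all hypothetical.

```python
import math
from typing import List, Sequence

# Hypothetical layout: feature map as [channel][flattened spatial position].
FeatureMap = List[List[float]]


def concat_channels(*maps: FeatureMap) -> FeatureMap:
    """Channel-wise concatenation of feature maps that share a spatial size."""
    size = len(maps[0][0])
    out: FeatureMap = []
    for m in maps:
        if any(len(ch) != size for ch in m):
            raise ValueError("all feature maps must share the same spatial size")
        out.extend([list(ch) for ch in m])
    return out


def _sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def channel_attention(fmap: FeatureMap,
                      w1: Sequence[Sequence[float]],
                      w2: Sequence[Sequence[float]]) -> FeatureMap:
    """Squeeze-and-excitation style reweighting (assumed stand-in design).

    w1 has shape r x C (reduction), w2 has shape C x r (expansion).
    """
    # Squeeze: global average pooling gives one scalar per channel.
    z = [sum(ch) / len(ch) for ch in fmap]
    # Excitation: FC -> ReLU -> FC -> sigmoid gives one weight per channel.
    h = [max(0.0, sum(w * v for w, v in zip(row, z))) for row in w1]
    s = [_sigmoid(sum(w * v for w, v in zip(row, h))) for row in w2]
    # Scale: multiply every value in a channel by that channel's weight.
    return [[s_c * v for v in ch] for s_c, ch in zip(s, fmap)]
```

For example, a 2-channel "scene" map and a 1-channel "motion" map can be fused with `concat_channels(scene, motion)` and then reweighted; with all-zero `w2` every channel weight is `sigmoid(0) = 0.5`, so the output is simply half of the input. In a trained network the weights would be learned, letting the model emphasize whichever modality is more informative for a given frame pair.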
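The final step, recovering absolute endoscope poses from predicted relative poses, amounts to chaining rigid transforms: starting from $T_0 = I$, each absolute pose is $T_k = T_{k-1}\,T_{k-1 \to k}$. Below is a minimal pure-Python sketch under stated assumptions: each relative pose is a 4×4 homogeneous matrix expressing frame $k$ in the coordinates of frame $k-1$, and the decoder's 6-DoF output is read as a translation plus Z-Y-X Euler angles in radians. Both conventions and the helper names (`pose_from_6dof`, `accumulate_poses`, `position`) are hypothetical, not taken from the paper.

```python
import math
from typing import List, Sequence

Matrix = List[List[float]]  # 4x4 homogeneous transform, row-major


def identity4() -> Matrix:
    return [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]


def matmul4(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def pose_from_6dof(tx: float, ty: float, tz: float,
                   rx: float, ry: float, rz: float) -> Matrix:
    """Build a rigid transform with rotation R = Rz @ Ry @ Rx (assumed convention)."""
    cx, sx = math.cos(rx), math.sin(rx)
    cy, sy = math.cos(ry), math.sin(ry)
    cz, sz = math.cos(rz), math.sin(rz)
    r = [
        [cz * cy, cz * sy * sx - sz * cx, cz * sy * cx + sz * sx],
        [sz * cy, sz * sy * sx + cz * cx, sz * sy * cx - cz * sx],
        [-sy, cy * sx, cy * cx],
    ]
    return [r[0] + [tx], r[1] + [ty], r[2] + [tz], [0.0, 0.0, 0.0, 1.0]]


def accumulate_poses(relative: Sequence[Matrix]) -> List[Matrix]:
    """Chain relative poses: T_0 = I, T_k = T_{k-1} @ T_rel_k."""
    trajectory = [identity4()]
    for t_rel in relative:
        trajectory.append(matmul4(trajectory[-1], t_rel))
    return trajectory


def position(t: Matrix) -> List[float]:
    """Translation part of a 4x4 transform, i.e. the camera position."""
    return [t[0][3], t[1][3], t[2][3]]
```

As a sanity check, four identical steps of "move 1 unit along x, then turn 90° about z" trace the corners of a unit square, (1, 0, 0), (1, 1, 0), (0, 1, 0), and return to the origin with an identity rotation, up to floating-point error. Because each step multiplies onto the previous estimate, any error in a single relative pose propagates to every later absolute pose, which is why drift accumulates over long endoscopic sequences.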
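The real-time claim translates into a per-frame budget of $1/30\,\mathrm{s} \approx 33.3\,\mathrm{ms}$ for the whole pipeline (optical flow, feature extraction, pose decoding, and pose accumulation). A minimal timing sketch, assuming a per-frame callable is available; `measure_latencies`, `fps_from_latencies`, and `is_realtime` are hypothetical helpers, not the authors' benchmark code.

```python
import time
from typing import Callable, Iterable, List, Sequence

REALTIME_FPS = 30.0  # threshold quoted in the abstract


def measure_latencies(step: Callable[[object], object],
                      frames: Iterable[object]) -> List[float]:
    """Time `step` on each frame with a monotonic high-resolution clock."""
    latencies = []
    for frame in frames:
        start = time.perf_counter()
        step(frame)
        latencies.append(time.perf_counter() - start)
    return latencies


def fps_from_latencies(latencies_s: Sequence[float]) -> float:
    """Average throughput in frames per second from per-frame latencies (s)."""
    total = sum(latencies_s)
    if total <= 0:
        raise ValueError("total latency must be positive")
    return len(latencies_s) / total


def is_realtime(fps: float, target: float = REALTIME_FPS) -> bool:
    return fps >= target
```

For instance, a constant 25 ms per frame gives 40 FPS and passes the check, while 40 ms per frame gives 25 FPS and fails it. Averaging total time over all frames, rather than taking the fastest frame, keeps occasional slow frames from being hidden; a stricter check could also require the worst-case latency to stay under the 33.3 ms budget.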